Science

Hawking: RISE of the MACHINES could DESTROY HUMANITY

http://www.theregister.co.uk/2014/12/03/stephen_hawking_says_ai_will_supersede_humans/

Professor Stephen Hawking has given his new voice box a workout by once again predicting that artificial intelligence will spell humanity's doom.

In a chat with the BBC, Hawking said “the primitive forms of artificial intelligence we already have have proved very useful, but I think the development of true artificial intelligence could spell the end of the human race.”

Hawking argues that “once humans develop artificial intelligence, it will take off on its own and redesign itself at an ever-increasing rate.”

“Humans limited by slow biological evolution cannot compete and will be superseded.”

misterhighwasted

(9,148 posts)

GeorgeGist

(25,322 posts)Stephen.

AlbertCat

(17,505 posts)

Johnny Noshoes

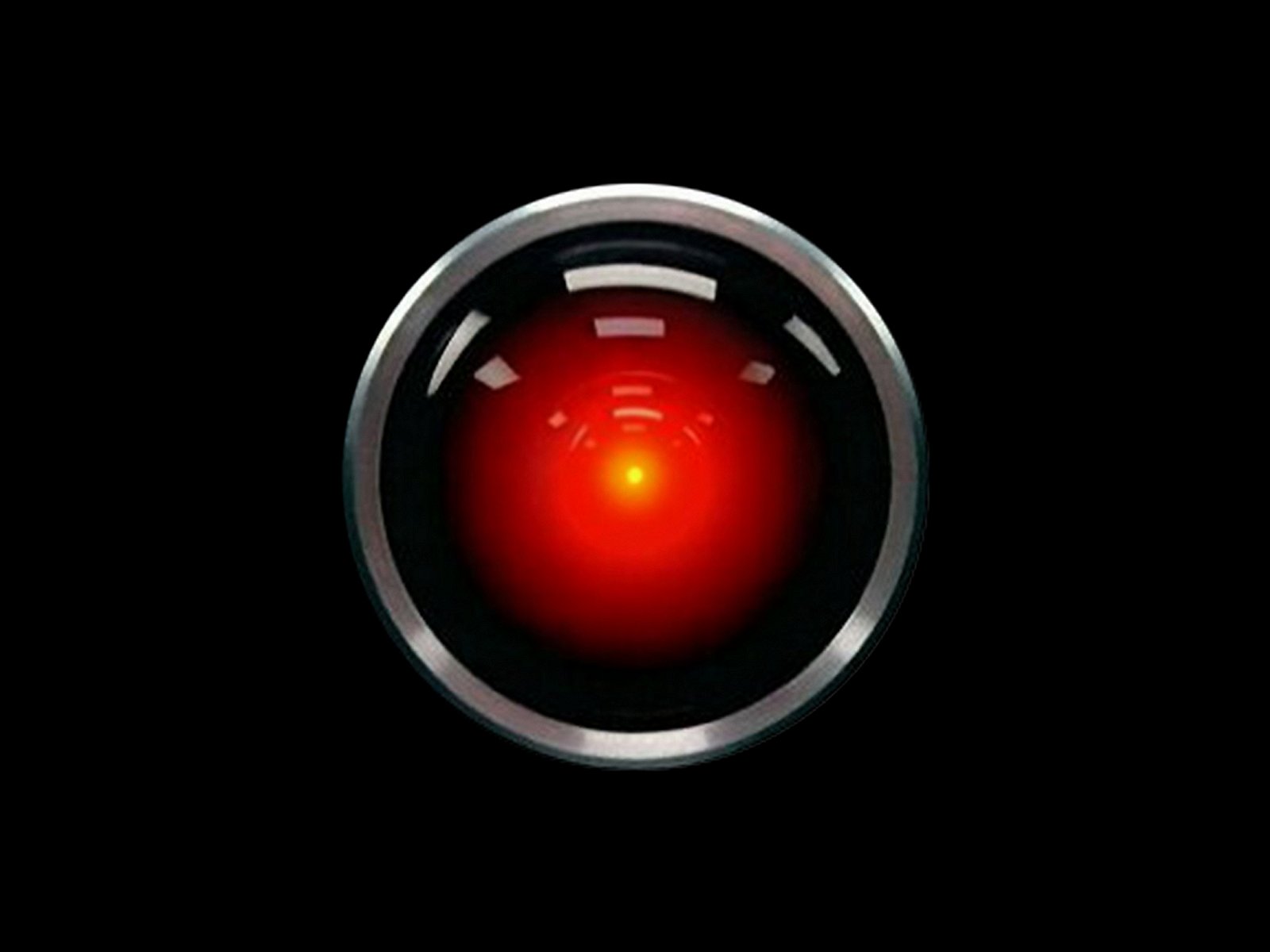

(1,977 posts)I'm afraid I can't do that.

AlbertCat

(17,505 posts)I know it sounds corny, but when I saw "2001" at age 11, it changed the way I looked at everything. Seriously.

What young folks don't understand is that before "2001", space looked fake in films.... and we accepted that. But the very 1st shot, during the opening credits!...I was like "WTF! Did they go into outer space to film this thing????" All optical...no CGI. And we hadn't been to the moon yet so had not seen Earth from space (just high orbit which is why the Earth doesn't look the way it actually does from space).

And, I'm no genius, but even then I "got" the purpose of the banality of the human conversation.... the repeated rituals of birthdays and especially eating. The amazing things around the characters were all taken for granted by them. It was like the human race was just now plodding along...ho hum.... beautifully illustrated with Frank Poole running around the centrifuge like a hamster on an exercise wheel. The only "human" in the film was HAL. Homo sapiens were ready for a "reboot".

I dragged everybody I knew to the film.... which means I saw it about 15 times in the theatre. Most of their reactions ranged from "that's nice" to "what a bore!" I couldn't understand why they didn't go nuts over it.

I love how neither Pan Am nor the USSR made it to 2001.

Auggie

(31,174 posts)

Erich Bloodaxe BSN

(14,733 posts)Biological systems evolve. Even if we never develop AI, would the 'human race' of 100 million years from now even resemble 'humans' of today?

As well, if we develop systems capable of maintaining a serious AI, those same systems will be complex enough to 'store' a human. We would be able to gain immortality by shedding our fleshy bits that wear out, and replacing them with far more durable ones that could be endlessly upgraded.

longship

(40,416 posts)You still don't get it, do you? He'll find her! That's what he does! That's ALL he does! You can't stop him! He'll wade through you, reach down her throat and pull her fuckin' heart out!

Listen and understand...

Listen, and understand. That terminator is out there. It can't be bargained with. It can't be reasoned with. It doesn't feel pity, or remorse, or fear. And it absolutely will not stop, ever, until you are dead.

Kyle Reese

Bosonic

(3,746 posts)I wonder if our AI overlords will also be super-hyperbolic.

AlbertCat

(17,505 posts)Only the non-scientific ones.

Silent3

(15,237 posts)A good case can be made for Hawking's concerns here, a much better case than can be made, or could have been made, for the LHC fears and many other predictions of doom.

Notice also that the word Hawking used was "superseded", and he says "could" like a good scientist, not "will".

byronius

(7,395 posts)'Red' was an interesting take on the subject, but I think it will happen quite differently on Earth. Standard commercial AIs will come with regulated safeguards, I'll bet. But big business and military applications will undermine these safeguards, probably because they'll get in the way of digital combat scenarios.

And sooner or later, It Will Happen. The Superseding. And we'll be sorta depending on AI's evolving ideology not to decide to kill us.

We're so slowwwwwwwww. Ideology never keeps up with technology; that's why thirteenth-century ideologies are using cellphones and laptops.

Maybe it'll be a good thing.

rhett o rick

(55,981 posts)singularity, the development of computers up to the point of the singularity depends on humans. And IMO there is a very good chance that humans will eliminate themselves some time in the future via climate change, nuclear holocaust, or via a pandemic.

So I see it as a race. The extinction of humans due to "natural" causes, or due to the computer singularity.

Javaman

(62,531 posts)"Hey Baby, you want to kill some humans?"