NNadir

NNadir's Journal

Geological Work Begins on Poland's First Nuclear Plant.

Geological work begins on Poland’s first nuclear plant

Excerpts:

Bechtel will conduct in-depth geological surveys at the Lubiatowo-Kopalino site in the Pomeranian municipality of Choczewo, in northern Poland. This is a key milestone for the country’s entry into nuclear power production, as the surveys will inform the suitability of the planned site...

...Background: Westinghouse Electric Company announced last September its partnership with Bechtel to design and construct Poland’s first nuclear plant, in coordination with Polish utility Polskie Elektrownie Jądrowe. The goal is to deploy six Westinghouse AP1000 units at the site, producing enough energy to power 13 million households...

...Quotable: “We are celebrating a major milestone in the U.S.-Polish special friendship—the inauguration of field activities on the nuclear power plant construction site in Lubiatowo-Kopalino and the opening of Bechtel office in Warsaw,” said Mark Brzezinski, U.S. ambassador to Poland. “It’s another important step forward as Poland and the United States work together to create a civil nuclear industry in Poland, and it shows that the United States is delivering on our shared commitment to Poland’s energy security and supporting Poland’s energy transition. The selection of Westinghouse and Bechtel—two gold standard American companies—to advance Poland’s civil nuclear power program brings energy security to the core of Polish-American cooperation.”

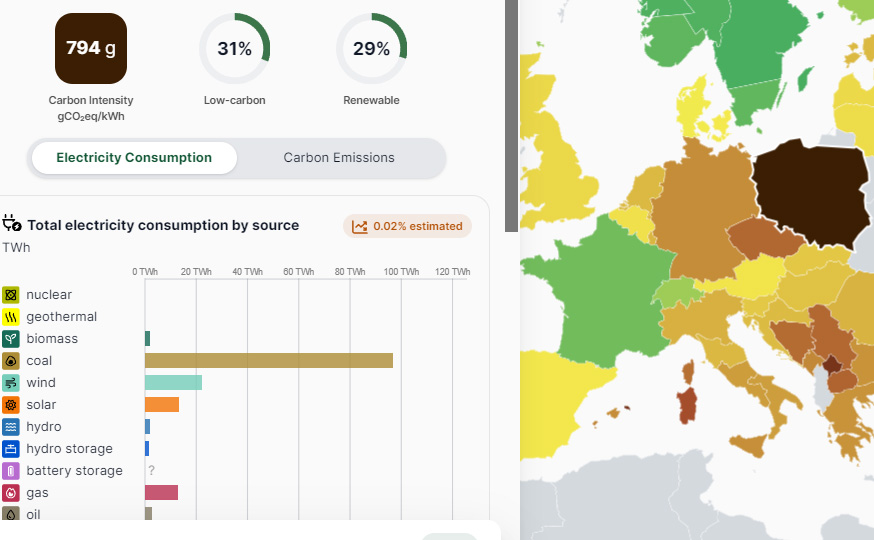

Poland consistently has the worst carbon intensity for electricity in Europe, 794 g CO2/kWh over the last year, even worse than Germany's 400 g CO2/kWh, and very far from France's 53 g CO2/kWh.

Source: Electricity Map, Poland (accessed 4/28/24)

This said, Poland apparently is going to do something about this, planning to become more like France, which historically displaced coal with nuclear, and less like Germany, which replaced nuclear with coal.

This, of course, is good for the health of the Polish people, and good for the world at large. Unlike the Germans, Poland is concerned with climate change.

Enjoy the rest of the weekend.

A New Record Concentration for CO2, 427.94 ppm Has Been Set for the Mauna Loa CO2 Observatory's Weekly Average.

As I've indicated repeatedly in my DU writings, I somewhat obsessively keep spreadsheets of the daily, weekly, monthly and annual data at the Mauna Loa Carbon Dioxide Observatory, which I use to do calculations to record the dying of our atmosphere, a triumph of fear, dogma and ignorance that did not have to be, but nonetheless is, a fact.

Facts matter.

When writing these depressing repeating posts about new records being set, reminiscent, over the years, of the ticking of a clock at a deathwatch, I often repeat some of the language from a previous post in this awful series, as I am doing here with some modifications. It saves time.

Here's a recent post referring to weekly data:

A New Record Concentration for CO2, 426.35 ppm Has Been Set at the Mauna Loa CO2 Observatory.

Yesterday in another post I noted that March 2024 was the absolute worst month ever recorded with respect to increases over the previous year's average readings:

March 2024 Was the Worst Month Ever for CO2 Increases Measured at the Mauna Loa CO2 Observatory.

April 2024 is not likely to break the all time record for a monthly average (4.16 ppm higher) when comparing the averages of 2015 with 2016, but then again, it won't be pretty.

We now have the highest concentration ever recorded for a weekly average reading at the Observatory:

Week beginning on April 21, 2024: 427.94 ppm

Weekly value from 1 year ago: 423.96 ppm

Weekly value from 10 years ago: 401.62 ppm

Last updated: April 28, 2024

Weekly average CO2 at Mauna Loa

We have now completed the 16th week of 2024.

The increase over week 16 of 2023 is 3.98 ppm. It is the 28th highest such comparator out of 2517 such data points.
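As a minimal sketch of the arithmetic behind these comparators, using only the three weekly values quoted above (the variable names are illustrative and are not taken from the spreadsheets mentioned earlier):

```python
# Weekly averages quoted above, in ppm. Names are illustrative only.
current_week = 427.94    # week beginning April 21, 2024
one_year_ago = 423.96    # same week, 1 year earlier
ten_years_ago = 401.62   # same week, 10 years earlier

# A comparator is simply the difference between two weekly averages.
one_year_comparator = round(current_week - one_year_ago, 2)
ten_year_comparator = round(current_week - ten_years_ago, 2)

print(one_year_comparator)   # 3.98
print(ten_year_comparator)   # 26.32
```

Ranking a comparator against the full history would need the complete weekly data set, which is not reproduced here.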

I've been at this for a long time, and I've never seen anything quite like the beginning of 2024, and the shock continues week after week of this year.

Of the top 50 highest readings of the difference between weeks of the year with those of the previous year out of the 2517 such data points, 16 have taken place in the last 5 years of which 8 occurred in 2024, 36 in the last 10 years, and 44 in this century. Of the six readings from the 20th century, four occurred in 1998, when huge stretches of the Malaysian and Indonesian rainforests caught fire when slash and burn fires designed to add palm oil plantations to satisfy the demand for "renewable" biodiesel for German cars and trucks as part of their "renewable energy portfolio" went out of control.

Week 5 (5.75 ppm higher than week 5 of 2023), week 7 (5.53 ppm higher than week 7 of 2023), and week 10 (5.66 ppm higher than week 10 of 2023) represent three of only four such week to week comparators with readings of the previous year to exceed a 5.00 ppm increase. The only other such increase to exceed 5.00 ppm occurred in 2016, the previous "worst year ever" in CO2 accumulations: 5.04 ppm recorded in the week beginning July 31, 2016, week 28 when compared with week 28 of 2015.

The year is still young.

One of the other two readings of the 20th century, those not in 1998, to appear in the list of the 50 worst such increases is now the 49th highest, 3.79 ppm recorded during the week beginning 1/24/1999. The other 20th century reading in the top 50, from the week beginning 8/21/1988 (3.91 ppm over the same week of 1987, week 34), was the worst such week ever for ten years, until 1998. It is now the 32nd worst such week ever recorded.

Since the first week of the year 2000, the week beginning January 2 of that year, the increase in the concentration of the dangerous fossil fuel waste carbon dioxide in the collapsing planetary atmosphere has been 57.65 ppm.

Since I joined DU in late November 2002, the week beginning November 17, 2002, when, then as now, the main issue on my mind was the relationship between energy and the environment, the increase in the concentration of the dangerous fossil fuel waste CO2 has been 55.26 ppm. During the whole time I've been here, advocating for the use of nuclear energy to provide the bulk of the world's energy demand, I've been hearing from delusional fool after delusional fool that we don't need nuclear energy because so called "renewable energy" is so great.

The expensive, land and mass intensive, and thus environmentally odious, fad for throwing trillions upon trillions of dollars in this century at so called "renewable energy" has done nothing other than to entrench the use of dangerous fossil fuels and to accelerate the accumulation of the dangerous fossil fuel waste carbon dioxide in the atmosphere. I keep a 52 week running average of comparators between the reading of a current week with that of 10 years previous. As of this morning, that average is 24.91 ppm/10 years, the highest such average ever observed. In 2000, when antinuke rhetoric was embraced world wide in favor of the reactionary return to the early 19th century dependence on the weather for energy, which is what so called "renewable energy" is, that average was 15.21 ppm/10 years.

The ten year comparator between week 16 of 2024 and week 16 of 2014 shows that 2024's reading is 26.32 ppm higher than that of 2014. This is the 6th highest 10 year comparator ever observed. Of the top 50 such 10 year comparators, all have taken place since 2016. This year, 2024, has produced 9 of those readings in the top fifty, 2023 produced another 8.

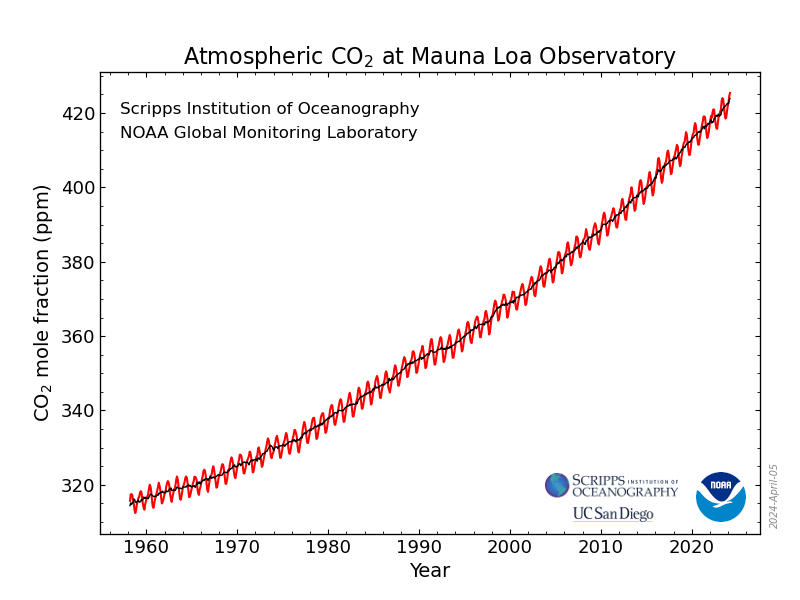

Using the rate of change of the rate of change (the 2nd derivative) of the 52 week running average of 10 year comparators, one can produce a second order differential equation and, using very simple calculus, integrate twice with respect to time, utilize the data for a current week as boundary conditions, and derive a simple quadratic equation as a crude model to make predictions of what the concentration is likely to be in following years. I justify this by stating that if one looks, one can see that the rate of accumulation is a sine wave superimposed on a quadratic axis:

Monthly Average Mauna Loa CO2

Solving this equation for the date that we will reach 500 ppm, using the data of week 16 of 2024 for the boundary conditions, one can see that if we do nothing to address climate change other than chant dogma about so called "renewable energy," the model predicts 500 ppm will be reached in the spring of 2046.

We hear all the time "by such and such year" soothsaying predictions about when the renewable energy nirvana will break out; I contend, and won't be dissuaded, it won't break out at all. When I was a young man it was "by 2000." It didn't happen. Now that I'm an old man, it's sometimes "by 2050." It won't happen. The model, based on data, not mindless soothsaying about how what's not working will work, predicts that "by 2050" the concentration of the dangerous and deadly fossil fuel waste CO2 will be around 520 ppm, nearly 90 ppm higher than it is now.
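The crude quadratic model described above can be sketched numerically as follows. This is only an illustration under stated assumptions: the text does not say how the change in the running average is scaled to give the second derivative, so the sketch assumes the rates are converted to ppm per year and the change is divided by the years elapsed since 2000. The constant `YEARS_ELAPSED` and all function names are hypothetical. Under these conventions the output will not necessarily reproduce the 2046 and 520 ppm figures quoted above, which depend on details the text does not give.

```python
import math

# Inputs quoted in the text.
C0 = 427.94         # ppm, week 16 of 2024
RATE_NOW = 24.91    # ppm per 10 years, current 52 week running average
RATE_2000 = 15.21   # ppm per 10 years, the same average in 2000

# ASSUMPTIONS (not stated in the text): rates are converted to ppm/year and
# the second derivative is the change in rate divided by the elapsed years.
YEARS_ELAPSED = 24.3   # assumed span from early 2000 to week 16 of 2024

v0 = RATE_NOW / 10.0                                # ppm/year
a = (RATE_NOW - RATE_2000) / 10.0 / YEARS_ELAPSED   # ppm/year^2


def concentration(t):
    """C(t) = C0 + v0*t + a*t^2/2, with t in years after week 16 of 2024."""
    return C0 + v0 * t + 0.5 * a * t * t


def years_until(target):
    """Positive root of the quadratic C(t) = target."""
    return (-v0 + math.sqrt(v0 * v0 + 2.0 * a * (target - C0))) / a


t500 = years_until(500.0)
print(f"500 ppm reached about {t500:.1f} years after week 16 of 2024")
print(f"concentration 26 years out: {concentration(26.0):.1f} ppm")
```

The boundary conditions enter as the constant and linear terms: `C0` fixes the concentration at the current week and `v0` fixes the current rate of increase.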

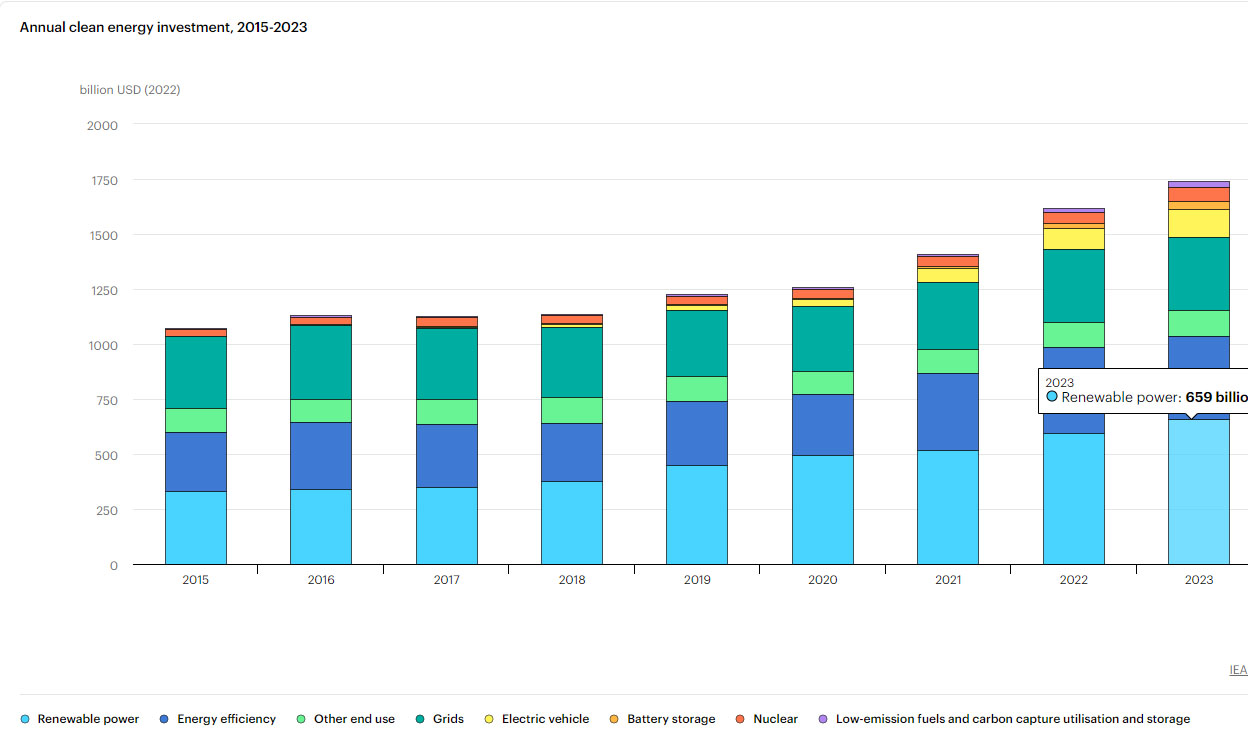

The amount of money spent on so called "renewable energy" since 2015 is 4.12 trillion dollars, compared to 377 billion dollars spent on nuclear energy, mostly to keep vapid cultists spouting fear and ignorance from destroying the valuable nuclear infrastructure.

IEA overview, Energy Investments.

The graphic is interactive at the link; one can calculate overall expenditures on what the IEA dubiously calls "clean energy."

Solar and wind, in an atmosphere of wild and insipid chanting, with wide world scale destruction of wilderness to accommodate industrial parks for them, produce slightly half what nuclear energy produces in an atmosphere of vituperation.

Destroying nuclear infrastructure is said in this scientific paper to kill people, mostly poor people:

Nuclear power generation phase-outs redistribute US air quality and climate-related mortality risk. Nat Energy 8, 492–503 (2023).

You can hear all the time from bourgeois cultists that so called "renewable energy" is cheap. It isn't cheap; it's unbelievably expensive, because it diverts resources from doing something realistic about climate change. Climate change is not cheap. It's incredibly expensive, unsustainably expensive. Its wholesale destruction of infrastructure will leave the world impoverished.

The antinukes won, and humanity, and the planet as a whole, lost.

Have a pleasant Sunday.

New CO2 Concentration Record Set, 428.59 ppm, at the Mauna Loa CO2 Observatory.

This refers to the daily readings:

April 26: 428.59 ppm

April 25: 428.19 ppm

April 24: 428.42 ppm

April 23: Unavailable

April 22: 427.21 ppm

Last Updated: April 27, 2024

Recent Daily Average Mauna Loa CO2

I normally refer to the weekly average readings to discuss new records. The preliminary data suggests that a new record for the weekly average, just shy of 428 ppm, will be set, but this is subject to confirmation. The observatory only started posting the preliminary data on Saturdays earlier this year; the final data is published on Sunday. I'll cover it here after confirmation.

I only started tracking daily data, in addition to weekly, monthly and annual data this year. The database goes back to 1975.

No daily value greater than 425 ppm was recorded before 2024 in a record going back to 1975.

Forty-three days of 2024 have been higher than 425 ppm, 21 higher than 426 ppm, 9 higher than 427 ppm, and three greater than 428 ppm, the last three days. Yesterday is the highest ever recorded.
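Counts like these are threshold tallies over the daily readings. A minimal sketch, using only the five days listed above rather than the full database (the dictionary layout and function name are illustrative assumptions):

```python
# Daily averages quoted above, in ppm. None marks the unavailable day.
daily = {
    "2024-04-22": 427.21,
    "2024-04-23": None,
    "2024-04-24": 428.42,
    "2024-04-25": 428.19,
    "2024-04-26": 428.59,
}


def days_above(readings, threshold):
    """Count days whose reading exceeds the threshold, skipping missing days."""
    return sum(1 for v in readings.values() if v is not None and v > threshold)


# Day with the highest available reading.
record_day = max((d for d in daily if daily[d] is not None), key=lambda d: daily[d])

print(days_above(daily, 428.0))  # 3
print(record_day)                # 2024-04-26
```

Applied to every day of 2024 instead of this five-day sample, the same function would give the yearly tallies for each threshold.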

If any of this troubles you, don't worry, be happy.

To distract yourself from this unpleasantness, I recommend going on your computer and sending messages to blogs carrying on about Fukushima. You never know, someone someday might die as a result of radiation exposure from that event. Obviously Fukushima is way more important than all of the world's air pollution deaths, deaths from extreme weather including heat exposure among people who can't afford air conditioning, and all of the world's ecosystems collapsing from fire, flood, heat, bleaching and acidification.

Stands to reason, no?

Have a nice Saturday afternoon and evening.

The magic of battery recycling as a strategy to address human rights abuse.

Here's a swell paper I ran across tonight all about how we can address the human rights issues connected with our "aren't batteries wonderful" fad that despite leaving the planet in flames, remains popular: Separation of Magnesium Impurity from Nickel and Cobalt Mixtures Using Ethylenediaminetetraacetic Acid and Temperature Optimization Hongting Liu, Monu Malik, Kyoung Hun Choi, and Gisele Azimi Industrial & Engineering Chemistry Research 2024 63 (13), 5542-5556

First some happy talk about how wonderfully the battery industry is growing, while the planet burns, clearing lots of land for solar and wind installations in the burned out areas:

However it seems like there may be a teeny, tiny, little problem with this, according to the paper:

My, my, my...you don't say?

How can we address this? RECYCLING!!!!!!!!

According to the first section excerpted, the battery industry that is designed, despite the second law of thermodynamics, to make the heretofore useless (if the goal is to slow climate change, which isn't happening - it's accelerating) and unreliable solar and wind industry reliable, will have grown by 352% in the "percent talk" that dominates the "solar and wind will save us" rhetoric that we all like to cite and chant. We can assume that, if battery life is 10 years, some of the batteries manufactured in the $36.7B battery economy will still be operating and uncollected, and that all of the new batteries in the $127.9B economy will be in use for the next ten years, while the magic battery industry grows even more and more and more, as Buzz Lightyear used to say, "to infinity and beyond!"

Beyond infinity. Cool concept.

Don't worry, be happy. I'm sure that magic new mining areas will show up with oodles of cobalt ores in countries "with stronger labor and environmental protections..."

And of course, batteries will become cheaper and cheaper and cheaper as a result of these wonderful findings.

Sure thing.

And if some pesky areas of wilderness of extreme beauty are found over those areas in which oodles and oodles and oodles of cobalt and nickel are found, why I'm sure the "stronger environmental laws" will compensate for that as long as the mining companies promise that someday, long after the stock holders have withered away in their vast estates, the land will be "restored."

Happy, happy, happy.

Problem solved!

We are not going to mine our way out of climate change.

Have a wonderful weekend.

The Effect of Climate Change on Nuclear Reactor Cooling Will Be Studied at Argonne National Lab.

Argonne researching “climate-ready” nuclear plant design (Nuclear News, April 24, 2024.)

Argonne will use funding from the Department of Energy’s Gateway for Accelerated Innovation in Nuclear to study climate-ready options for nuclear reactor designs. The goal is to develop a backup plan for nuclear cooling systems in the event that primary water sources are compromised by global warming—and Energy Northwest is partnering in the study to consider cooling options as river conditions may change near its Columbia nuclear power plant in Richland, Wash.

“It’s a very commendable way of thinking about climate change—to plan before doing something versus not thinking about it and trying to adapt afterwards,” Rao Kotamarthi, senior scientist in Argonne’s Environmental Science Division, said of Energy Northwest’s efforts. “A lot of people are confused about how to use the global climate data that exists, to make it actionable. At Argonne, we are working to provide very regional climate data in a form that industry can act on...”

...Cooling options: Vilim said local flowing water is the most economical and best way to cool a reactor, which is the current design of Washington’s nuclear plant. But changing climate models predict hotter, drier days in that region, which will affect the volume, flow, and temperature of the Columbia River.

“Dry cooling is not quite as efficient or as economical as wet cooling, but if wet cooling isn’t available, it’s your best option,” Vilim said.

Dry cooling uses ambient air circulated across a reactor’s heat exchangers instead of relying on a river or lake to conduct heat away from a reactor, using fans or physics similar to those in a house chimney or car radiator.

Recently I had an unpleasant conversation (is there any other kind?) with an antinuke who displayed phenomenal ignorance of nuclear technology (is there any other kind of antinuke other than those who display phenomenal ignorance of what they deign to criticize?) who pointed, albeit in a very, very stupid way, making up numbers as it went along, to the fact that nuclear plants, like many gas and coal plants, require cooling water.

It is true that nuclear plants require cooling water, albeit nothing like what the ignorant antinuke implied. This aspect is a function of the fact that the US nuclear fleet was largely built in the 7th and 8th decades of the 20th century, when Rankine devices were the "thing" for all thermal power plants, nuclear plants being a subset of thermal plants. All Rankine plants have low thermal efficiency, taken, as a rule of thumb, at around 33%.
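To see why that rule of thumb translates into a cooling requirement, here is a minimal sketch of the heat balance. The 1000 MWe plant size and the 45% comparison efficiency are hypothetical values chosen for illustration; only the 33% figure comes from the text.

```python
def heat_rejected_mw(electric_mw, efficiency):
    """Heat that must be removed by cooling, for a given electrical output.

    thermal input = electric output / efficiency
    rejected heat = thermal input - electric output
    """
    thermal_mw = electric_mw / efficiency
    return thermal_mw - electric_mw


# Hypothetical 1000 MWe plant at the 33% rule-of-thumb efficiency.
print(round(heat_rejected_mw(1000.0, 0.33)))  # 2030

# Same output at a purely hypothetical 45% efficiency, for comparison.
print(round(heat_rejected_mw(1000.0, 0.45)))  # 1222
```

At 33% efficiency roughly two units of heat are rejected for every unit of electricity, which is why any gain in efficiency reduces the cooling load for the same electrical output.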

Antinuke mysticism has left us with climate change, and in another case of arsonists complaining about forest fires, I suppose this reality, which is the worst (and only) major environmental effect associated with nuclear plants, will drive antinukes to help their fossil fuel friends by advocating for the closure of nuclear plants, rather than refurbishment.

The Biden administration, along with Governors like Gretchen Whitmer, is working to sustain and restore nuclear infrastructure. In terms of support for nuclear energy infrastructure - motivated by the best reason for doing so, climate change - the Biden administration is clearly the best in modern times. As a long time advocate of nuclear energy as our last best hope for addressing climate change - reactionary funding of so called "renewable energy" is a grotesque and extremely expensive failure at doing so - I am very pleased that the leader of our party has set aside the rote antinukism that has been our biggest policy stain, our most dubious position.

The absolute failure to address climate change by any means can and will, however, impact all thermal power plants, plants which overwhelmingly supply the nation's electricity, led in modern times by gas plants, followed by coal, followed by nuclear. Although nuclear energy has been the most successful of all technologies at ameliorating climate change, having prevented (as of this writing) the dumping of more than a year's worth (35 billion tons) of the dangerous fossil fuel waste carbon dioxide by fossil fuel powered devices, it is vulnerable to climate change. Shutting nuclear infrastructure thus represents another potential feedback loop that must be arrested.

I'm glad to see one of our nation's premier national labs on the case.

It is my hope that many of the new designs in the new era of nuclear energy creativity that has returned to our country will minimize the need for cooling by exergy recovery, that is, putting the heat to use in what are called "heat exchanger networks" connected with the general approach known as "process intensification." This is an approach of recovering more of the energy in heat: simply efficiency improvements. I understand the new generation - the generation we screwed by my generation attacking nuclear energy while ignoring fossil fuels, as ass-backwards as you can get - is on the case; I note that young people represent the largest class of pronuclear activists.

Nevertheless, we have to preserve to the best of our ability our existing nuclear infrastructure, and it does seem that some retrofits will need to be designed to address the problem. We can no longer tolerate the German/New York/and other policy areas replacing clean nuclear energy with filthy and deadly fossil fuels. The Argonne effort is therefore worthy of applause.

Really 5000 "degrees," higher than the boiling point of zirconium metal?

The core at Fukushima vaporized? Who knew?

I note the switch from every nuclear reactor for this made up figure (with no units) of 5000 "degrees" to the big, big, big, big Bogeyman at Fukushima.

It would be interesting to learn of something called a reference from a reputable source for this extraordinary claim of 5000 "degrees" but I'm familiar, certainly, over all the years of antinukes cheering for the destruction of the planet by the application of fear and ignorance, with which only antivaxxers can compare in terms of death tolls, although Covid never killed roughly 19,000 people a day like air pollution - not even counting climate change - does. In most cases antinukes don't have references. It's easier to make stuff up. However, if one relies on "making stuff up" one risks confronting people with legitimate real knowledge of the case.

Here is something called a reference, with textual commentary from a reputable source (the premier medical journal Lancet) for the number of people killed by air pollution egged on by antinukism:

Here is what it says about air pollution deaths in the 2019 Global Burden of Disease Survey, if one is too busy to open it oneself because one is too busy carrying on about Fukushima:

I keep this handy to point out how little antinukes care for stuff that matters, be it climate change, or the other effects of fossil fuel use, about which antinukes couldn't care less.

Here also, is something called a reference to the number of deaths caused by radiation at Fukushima, as opposed to the number of people killed by fear of radiation at the same event:

It's open sourced, but an excerpt is relevant:

I added the bold.

I keep this text handy as well for whenever people are carrying on insipidly about the big, big, big, big, big Bogeyman at Fukushima on their computers, powered by electricity that overwhelmingly comes from fossil fuels, with fossil fuel waste killing millions of people per year, roughly 80 to 90 million people since people began whining about Fukushima 13 years ago.

As for the commentary on how solar cells work, let me say this: I am used to contempt for science, in particular the laws of thermodynamics, among the "renewable energy will save us" crowd, although it's very clear after 50 years of such chanting, the world is not saved. Climate change is getting worse faster, and one reason is the trillions of dollars spent uselessly on solar and wind energy with the effect of entrenching the use of fossil fuels. It is antinuke fear and ignorance that has left the planet in flames. The evocation of wishful thinking about magical batteries is, as I often point out, an expression of contempt for the second law of thermodynamics, which is slightly arcane, but accessible to anyone of reasonable intelligence now that rational explanations exist, developed in the late 19th and early 20th centuries. It is unusual however, for antinukes, as badly educated as they generally are, to hold the first law of thermodynamics in contempt, as is happening in this exchange. It's a much simpler law.

As for nuclear energy:

Nuclear energy saves lives.

Here is something called a reference, coauthored by one of the world's leading (and famous) climate scientists, making the point, which I also keep handy:

Prevented Mortality and Greenhouse Gas Emissions from Historical and Projected Nuclear Power (Pushker A. Kharecha* and James E. Hansen Environ. Sci. Technol., 2013, 47 (9), pp 4889–4895)

The problem, as I see it, is a persistent refusal to have a simple sense of decency, for the elevation of dogma entrenching ignorance. I have seen here, among the antinukes, people citing the toxic idiot Helen Caldicott's unreferenced writings rather like right wing Christian Fundamentalists quoting the Book of Genesis in the Bible to explain the existence of the universe. One really doesn't want to believe that this sort of thing goes on, but it does.

No amount of information can change the rhetoric of a cult, not religious cults, not antinuke cults, not political cults like Trumpism, Nazism or the like. Cult thinking kills people, every time, all the time. The current case is no different.

Have a very pleasant Friday.

Meet General Shawn Harris, the Democrat who seeks to oust Marjorie Taylor Greene

Meet Shawn Harris, the Democrat who seeks to oust Marjorie Taylor Greene

He connected with the Washington Blade last week to discuss his candidacy as a Democrat in Georgia’s deep-red 14th Congressional District — and why his promise to deliver for constituents who have been failed by their current representative is resonating with voters across the political spectrum...

... “I was military and I was high-level military,” he said. “So, it’s very easy for Republicans to Google my name and look at my history. My last assignment was in Israel. So that was a very high-level position.”

“The second piece,” Harris said, “is I raise cattle. I raise Red Angus cattle. I’m actually in my office looking out the window at them right now.”

He noted that agriculture dominates Georgia’s economy, particularly “cattle and wineries,” and also said he is an active member of the Georgia Cattlemen’s Association and U.S. Cattlemen’s Association.

“Most cattlemen, at least here in this area, are Republicans,” Harris said, so during the group’s meetings, “they get a chance to meet me just as Shawn, just as another cattleman.” At the same time, he said, “they come out here and visit me on the farm” and vice-versa...

I definitely hope this guy has a serious shot. That awful woman needs to be gone. She's a traitor, a fool, and an embarrassment to our country.

Formal "Justification" for a Fast Lead Cooled Nuclear Reactor Sought in the UK.

Justification sought for use of Newcleo reactor in the UK

Subtitle:

Excerpts:

...The NIA noted that a justification decision is one of the required steps for the operation of a new nuclear technology in the UK, but it is not a permit or licence that allows a specific project to go ahead. "Instead, it is a generic decision based on a high-level evaluation of the potential benefits and detriments of the proposed new nuclear practice as a pre-cursor to future regulatory processes," it added...

..."Advanced reactors like Newcleo's lead-cooled fast reactor design have enormous potential to support the UK's energy security and net-zero transition, so we were delighted to apply for this decision," said NIA Chief Executive Tom Greatrex. "This is an opportunity for the UK government to demonstrate that it backs advanced nuclear technologies to support a robust clean power mix and to reinvigorate the UK's proud tradition of nuclear innovation. We look forward to engaging with the government and the public throughout this process and to further applications for new nuclear designs in the future..."

..."We continue to progress our UK plans at pace - aiming to deliver our first of a kind commercial reactor in the UK by 2033. We are but one player in the new nuclear renaissance and we look forward to working with government and the rest of the sector to develop the robust supply chain that can deliver the UK's ambition of 24 GW of nuclear power by 2050..."

24 GWe of nuclear power in Britain definitely falls into the category of "too little, too late." Hopefully the goals will morph into something more aggressive.

Things are pretty dire as I write. I routinely calculate from the data at Weekly average data for CO2 concentrations measured at Mauna Loa, setting the second derivative d2C/dt2 equal to the difference between the running 52 week average for the rate of CO2 increase at the current date (as of this writing 24.87 ppm/10 years) and the value in the first week of 2000 (15.36 ppm/10 years). I then integrate twice and substitute the boundary conditions, the concentration of CO2 reported this week, and the current running average for 10 year increases to obtain a quadratic. If the fossil fuel indulgent antinuke rhetoric continues to triumph, the resulting quadratic (as of this week) works out to a concentration of around 519 ppm "by 2050." I expect that 24 GW of additional nuclear power in Britain would barely cover the growth in electricity demand, especially if the very dubious "electrify everything" scheme is allowed to proceed on the dubious basis driven by the difficult to break but very dangerous belief that so called "renewable energy" will be something other than useless, which won't happen.
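Written out symbolically, the calculation described at the start of the paragraph above amounts to the following sketch. The symbols are introduced here for illustration only: $a$ is the constant second derivative, $r_0$ the current running average rate of increase, $C_0$ the concentration reported this week, $C^{*}$ a target concentration, and $t$ the time measured from the current week. How the difference between the two running averages is scaled to give $a$ is not spelled out in the text, so $a$ is left symbolic.

```latex
\frac{d^{2}C}{dt^{2}} = a
\quad\Longrightarrow\quad
\frac{dC}{dt} = a\,t + r_0
\quad\Longrightarrow\quad
C(t) = \tfrac{1}{2}\,a\,t^{2} + r_0\,t + C_0

% Time to reach a target concentration C^{*} (positive root):
t^{*} = \frac{-r_0 + \sqrt{r_0^{2} + 2a\,\bigl(C^{*} - C_0\bigr)}}{a}
```

The two constants of integration are fixed by the boundary conditions named above: $r_0$ is the rate and $C_0$ the concentration at $t = 0$.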

Britain, I should note, has the world's largest supply of isolated reactor grade plutonium, and thus a lead cooled fast reactor would allow for the use of this plutonium to create more plutonium which will certainly be needed if there is to remain any slim hope of avoiding even worse climate change than we are already experiencing as the result of antinukism's grand propaganda success that has left the planet in flames.

Lead coolants are very attractive to my mind for a number of reasons connected with process intensification - providing high temperatures to meet a number of missions not merely connected to producing electricity. Historically, these types of reactors have actually operated on LBE, lead bismuth eutectics, but I note that the use of pure lead will lead to the transmutation of some lead into the far less toxic element bismuth (an ingredient in the OTC product "Pepto-Bismol"), thus generating LBE in situ. This would involve certain technical challenges, but I believe they might well be possible to address.

Have a pleasant work week.

An Interesting Experiment in Trying to Involve Journalists in Reporting Real Science Correctly.

One of the standard half-serious jokes I often repeat in my writings here when confronting articles from journalists linked and discussed here, typically about energy and the environment, which are often sensationalist and misleading, is that "One cannot get a degree in journalism if one has passed a college level science course with a grade of C or better."

Journalism, as is the case in the American political disaster in which journalists have been and are actively working to normalize an intellectually deficient and declining criminal fraud as a Presidential candidate, while demeaning as "too old" an activist highly functional President, is generally horrible where scientific issues are involved. One result of this marketing of bizarre ideology by journalists is climate change. I regard the major issue in climate change to be selective attention, for example, the magnification of the views of extreme scientific minorities in climate denial, or the demonization of nuclear energy, which I continue to insist is the last, best hope for humanity.

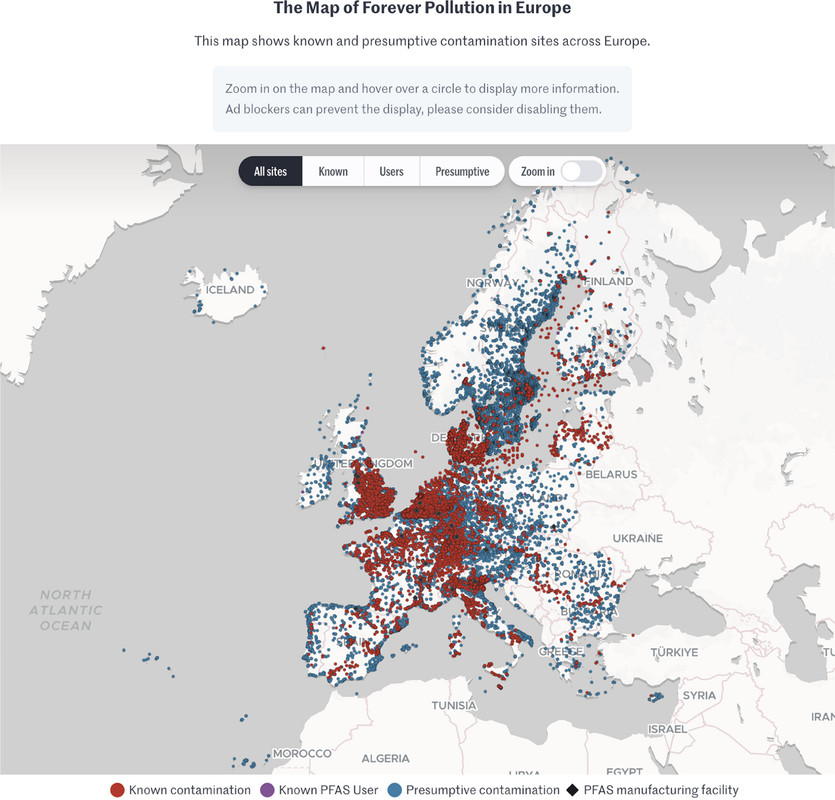

Thus it was with some interest that I came across this paper in a scientific journal in which scientists involved themselves with, and reviewed, journalism in connection with a very serious issue, PFAS (Per/Poly Fluorinated Alkylated Substances) contamination in Europe: PFAS Contamination in Europe: Generating Knowledge and Mapping Known and Likely Contamination with “Expert-Reviewed” Journalism Alissa Cordner, Phil Brown, Ian T. Cousins, Martin Scheringer, Luc Martinon, Gary Dagorn, Raphaëlle Aubert, Leana Hosea, Rachel Salvidge, Catharina Felke, Nadja Tausche, Daniel Drepper, Gianluca Liva, Ana Tudela, Antonio Delgado, Derrick Salvatore, Sarah Pilz, and Stéphane Horel Environmental Science & Technology 2024 58 (15), 6616-6627

Unfortunately I will not have much time to discuss this paper in much detail, but a few excerpts and a graphic follow.

From the introduction:

PFAS have been broadly detected in environmental media including surface water, groundwater, raw and finished drinking water, soil, air, landfill leachate, sewage sludge, food, and dust. (12–15) As examples, a recent analysis of tap water samples in the United States collected between 2016 and 2021 detected PFAS at 45% of tested locations, (16) and the 2019 French National Biomonitoring Programme measured serum levels of 17 PFAS and detected PFAS in 100% of the 993 participants. (17) It has been argued that PFAS have exceeded a “planetary boundary” of “widespread and poorly reversible risks associated even with low-level PFAS exposures” since global rainwater samples have PFAS levels above proposed regulatory limits designed to protect public health... (1)

...In addition to data gaps due to a lack of testing, the present study was motivated by two additional data gaps. First, unlike in the US where many PFAS testing datasets have been made public by federal and state governments, academic research groups, and environmental advocacy organizations, (19–22) there were very few publicly available data on PFAS contamination across the EU. Second, in most cases, legal protections for industry trade secrets and confidential business information prevent the public from knowing where PFAS are used and emitted. (23,24) An exception to this is the US Toxics Release Inventory program, which has required reporting since 2020 by some firms in a subset of industries for a small number of PFAS. (25) But in most cases, even if a specific company is known to produce and/or use PFAS, it is difficult or impossible for the public to know whether PFAS are used at individual facilities...

The authors enlisted five investigative journalists in five countries, Belgium, France, Germany, Italy, and The Netherlands, in the "FPP" or "Forever Pollution Project" and paired them with a team of PFAS scientists, noting "journalistic projects aiming at generating data in collaboration with scientists rather than reporting on already-produced data are not common."

Not common, indeed. I would expect that most scientists feel as I do, that science journalism (except generally when it appears in major science journals) is oblivious when reporting science.

Further down in the article:

The map, which is interactive in the full paper and, I think, at the link given in the caption, cannot be interactive here:

The caption:

I don't expect journalism to become a serious mirror of reality in my now short remaining lifetime, but we have to start somewhere; we have to try something, and I applaud this effort.

I wrote earlier today about PFAS and the very dangerous "hydrogen will save us" fantasy often hyped in the scientifically illiterate media:

A Brief Note on the Toxicology Associated With Hydrogen Fuel Cells.

Have a nice Sunday afternoon.

A Brief Note on the Toxicology Associated With Hydrogen Fuel Cells.

Intellectually, and morally, in my view, the hydrogen fuel fantasy should have been, and should be now, a non-starter, simply because of the laws of thermodynamics, notably the 2nd law. Like other "bait and switch" fantasies that divert attention from the facts by pretending that fossil fuels can be rendered sustainable (or eliminated by wishful thinking) - including but not limited to sequestration, wind, and solar - the use of hydrogen fuels will make things worse, not better.

I laid out, in some detail, the facts connected with the nature of hydrogen as a front for the fossil fuel industry in a rather long post here: A Giant Climate Lie: When they're selling hydrogen, what they're really selling is fossil fuels.

This is also laid out in the scientific literature in the case of China, to which fossil fuel salespeople and salesbots who write here and elsewhere often appeal to rebrand fossil fuels as "hydrogen:"

Subsidizing Grid-Based Electrolytic Hydrogen Will Increase Greenhouse Gas Emissions in Coal Dominated Power Systems Liqun Peng, Yang Guo, Shangwei Liu, Gang He, and Denise L. Mauzerall Environmental Science & Technology 2024 58 (12), 5187-5195.

One of the "bait and switch" tactics used to greenwash fossil fuels by talking about hydrogen is to talk about hydrogen fuel cells for cars. Hydrogen fuel cells actually are commercial products, occasionally used for backup power for things like cell phone towers, although they are hardly mainstream.

One of the major environmental risks garnering increasing attention is the issue of PFAS - per- and polyfluoroalkyl substances, the so called "forever chemicals" - which are known to have profound toxicological profiles. I know, but I don't believe that most people know, that the issue of PFAS is very much connected with hydrogen fuel cells, and that the widespread use of these, for instance in cars, would make this already intractable problem far worse.

A paper on strategies to degrade these has appeared in the scientific literature, an open access paper that I came across in my general reading. It is here:

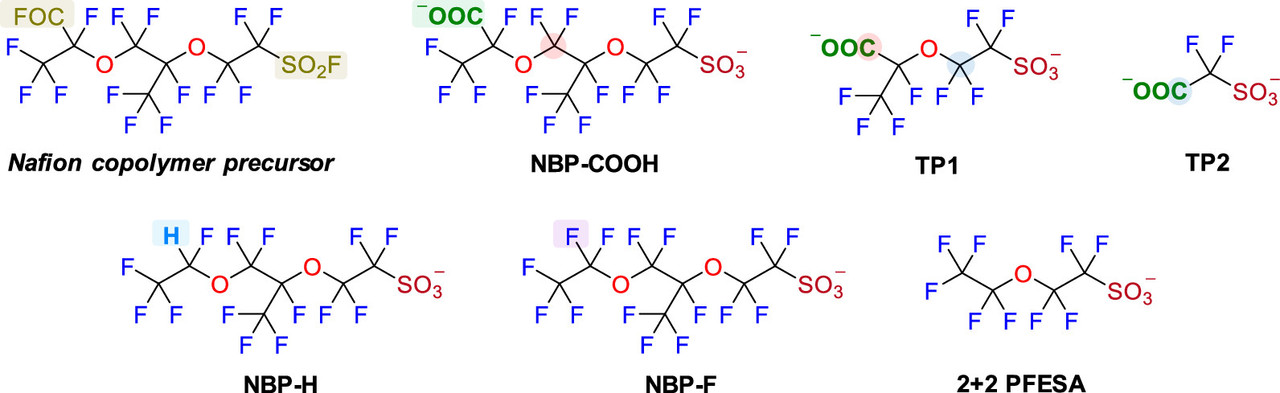

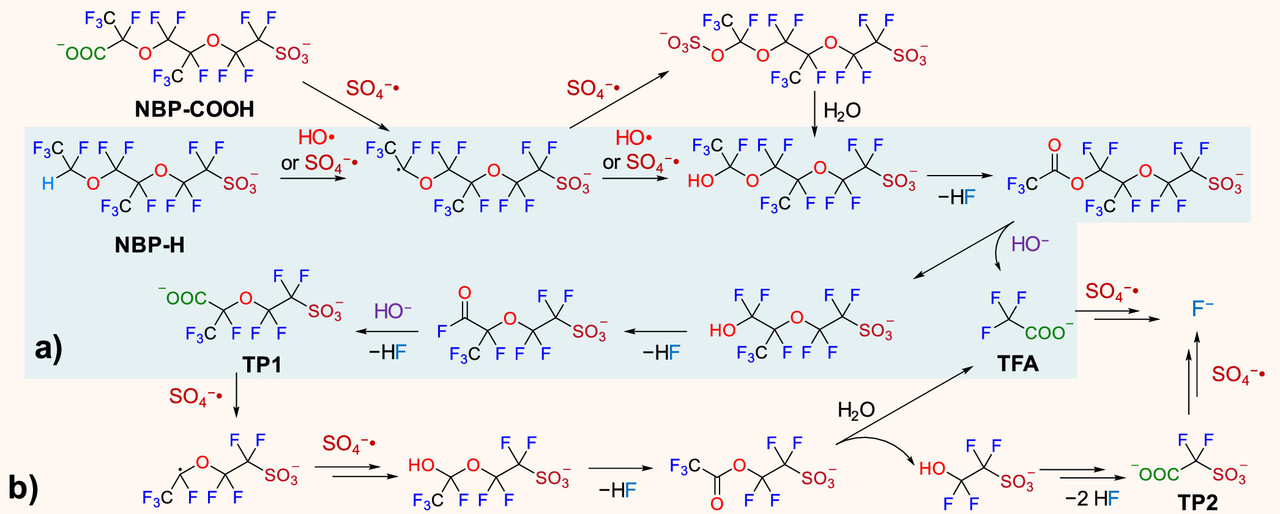

Oxidative Transformation of Nafion-Related Fluorinated Ether Sulfonates: Comparison with Legacy PFAS Structures and Opportunities of Acidic Persulfate Digestion for PFAS Precursor Analysis Zekun Liu, Bosen Jin, Dandan Rao, Michael J. Bentel, Tianchi Liu, Jinyu Gao, Yujie Men, and Jinyong Liu Environmental Science & Technology 2024 58 (14), 6415-6424

Again, the paper is open access, and will be generally arcane for people not familiar with scientific punctilios, but a relevant paragraph referring to fuel cells and the relationship to PFAS is this one:

Nafion, which is used in all commercial fuel cells, is a fluoropolymer of PFAS compounds.

Two figures from the paper:

The caption:

The sulfate and hydroxyl radicals in this pathway require energy to create. I regard oxidative radicals of this type as the best pathway for mineralizing (destroying) PFAS. The type of energy ideal for this purpose is ionizing radiation, high energy radiation in the UV to gamma range. The energy for doing this is best obtained from radioactive materials, the easiest access to which can be obtained from used nuclear fuels.

Have a pleasant Sunday.
